Challenge 4: 10K Concurrent Viewers

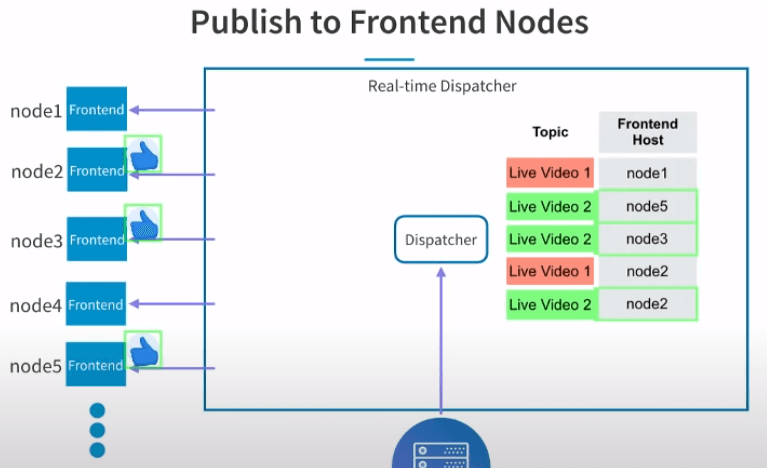

- Scale horizontally to handle more concurrent viewers -> Add multiple Frontend nodes and coordinate them using a Dispatcher node.

- Like the Frontend node, the Dispatcher keeps a subscriptions table that records which Frontend nodes should receive which events.

- This table is populated when Frontend nodes send subscription requests telling the Dispatcher which live videos they are interested in (i.e. which live videos their connections are subscribed to).
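
The two-level subscription scheme described above can be sketched roughly as follows. This is a minimal in-process illustration, not the original system's implementation: the class names, method names (`subscribe`, `route`, `deliver`) and the string IDs are all assumptions made for the example, and real nodes would talk over the network rather than through direct method calls.

```python
from collections import defaultdict


class Dispatcher:
    """Hypothetical Dispatcher: maps each live video to interested Frontend nodes."""

    def __init__(self):
        # Subscriptions table: video_id -> set of Frontend node ids.
        self.subscriptions = defaultdict(set)

    def subscribe(self, frontend_id, video_id):
        # Called when a Frontend node sends a subscription request.
        self.subscriptions[video_id].add(frontend_id)

    def route(self, video_id):
        # Frontend nodes that should receive events for this video.
        return sorted(self.subscriptions.get(video_id, ()))


class Frontend:
    """Hypothetical Frontend node: maps each live video to its own connections."""

    def __init__(self, node_id, dispatcher):
        self.node_id = node_id
        self.dispatcher = dispatcher
        # Local subscriptions table: video_id -> set of connection ids.
        self.subscriptions = defaultdict(set)

    def subscribe(self, connection_id, video_id):
        # Only the first local viewer of a video triggers a request upstream.
        first_viewer = video_id not in self.subscriptions
        self.subscriptions[video_id].add(connection_id)
        if first_viewer:
            self.dispatcher.subscribe(self.node_id, video_id)

    def deliver(self, video_id, event):
        # Fan the event out to every local connection watching the video.
        return [(conn, event) for conn in sorted(self.subscriptions.get(video_id, ()))]


dispatcher = Dispatcher()
fe1 = Frontend("fe-1", dispatcher)
fe2 = Frontend("fe-2", dispatcher)
fe1.subscribe("conn-a", "video-1")
fe2.subscribe("conn-b", "video-1")
fe2.subscribe("conn-c", "video-2")

# An event for video-2 only needs to reach fe-2.
targets = dispatcher.route("video-2")
```

In this sketch the Dispatcher never sees individual connections; it tracks only which Frontend nodes care about a video, and each Frontend node handles the final fan-out to its own viewers.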

IDEAS CURATED BY

Alt account of @ocp. I use it to stash ideas about software engineering

Similar ideas to Challenge 4: 10K Concurrent Viewers

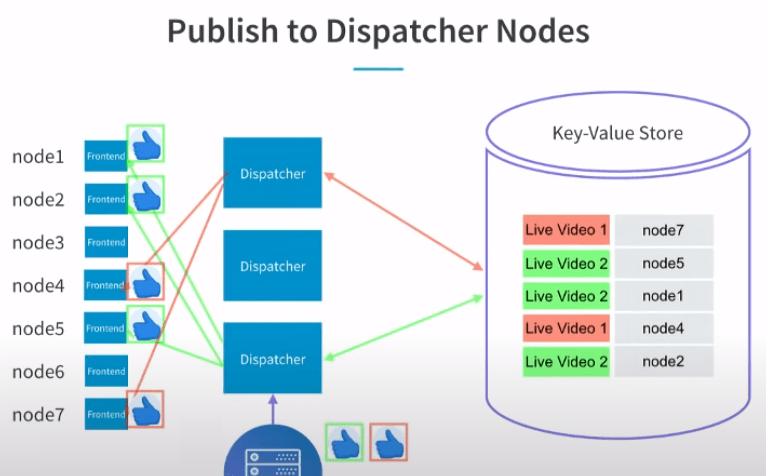

Challenge 5: 100 Likes/second

- Scale horizontally again to handle more events -> Add multiple Dispatchers and move the subscription table into a key-value store so it's accessible to all Dispatchers.

- Dispatchers are independent from Frontend nodes and don't have persistent connect...

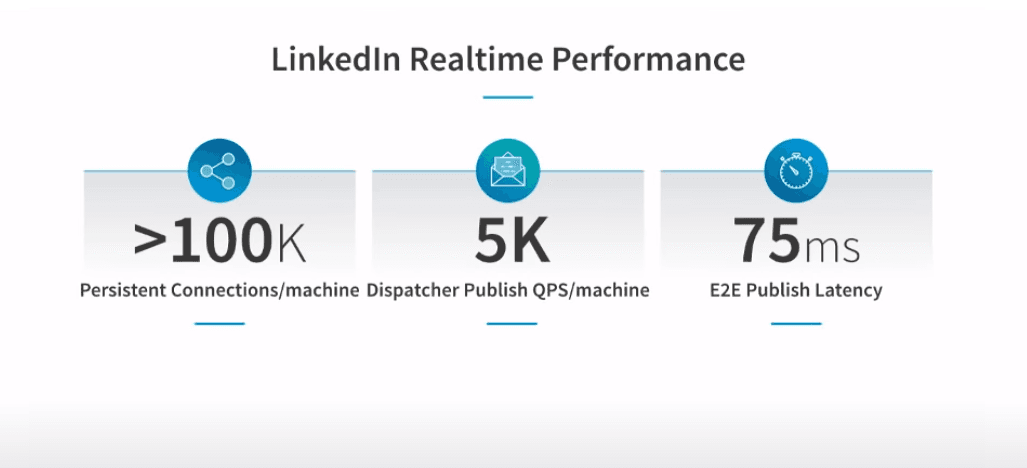

Performance and scale

- Each Frontend node handles 100k persistent connections. It handles only this many connections because the server is doing a lot of work processing multiple types of data (likes, comments, instant messaging etc.).

- Each Dispatcher can publish 5k events per seco...

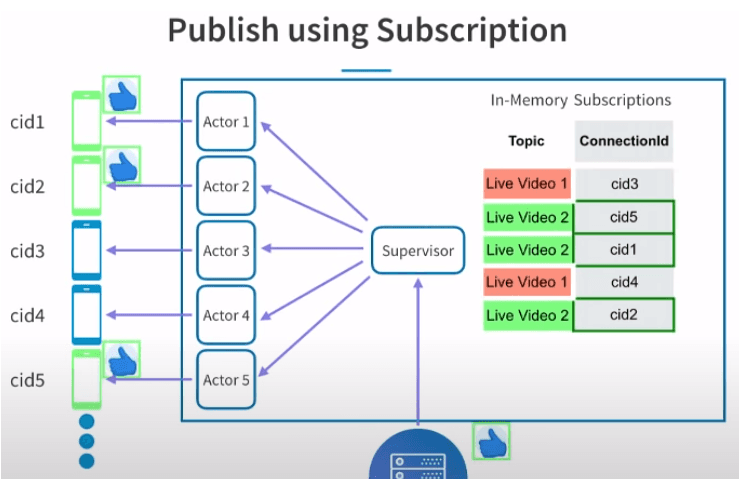

Challenge 3: Multiple Live Videos

- Clients are subscribing to events for a particular live video i.e. they are telling the server which live video they are watching.

- The Frontend server stores all subscriptions in an in-memory table.

- Every time a new event is published, the su...
