Matrix Decomposition

Matrix decomposition reduces a matrix into its constituent parts. It simplifies complex matrix operations by performing them on the decomposed matrices rather than on the original matrix.

A matrix can be decomposed in many ways. Common techniques include LU decomposition, QR decomposition, and singular value decomposition (SVD).
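As a minimal sketch of the idea, the Python below uses LU decomposition. It is one widely used technique, chosen here only for illustration, since the text does not single out a method. The helper names `lu_decompose`, `lu_solve`, and `matmul` are hypothetical. The sketch skips pivoting, so it assumes every pivot is nonzero. It shows how a linear system can be solved with two simple triangular substitutions on the factors instead of working on the original matrix directly.

```python
def lu_decompose(a):
    """Doolittle LU decomposition without pivoting.

    Returns (lower, upper) such that a == lower @ upper, where lower has
    ones on its diagonal. Assumes a is square with nonzero pivots.
    """
    n = len(a)
    lower = [[0.0] * n for _ in range(n)]
    upper = [[0.0] * n for _ in range(n)]
    for i in range(n):
        lower[i][i] = 1.0
        # Fill row i of the upper-triangular factor.
        for j in range(i, n):
            upper[i][j] = a[i][j] - sum(lower[i][k] * upper[k][j] for k in range(i))
        if upper[i][i] == 0.0:
            raise ValueError("zero pivot: this sketch does not do pivoting")
        # Fill column i of the lower-triangular factor.
        for j in range(i + 1, n):
            partial = sum(lower[j][k] * upper[k][i] for k in range(i))
            lower[j][i] = (a[j][i] - partial) / upper[i][i]
    return lower, upper


def lu_solve(lower, upper, b):
    """Solve (lower @ upper) x = b by forward, then backward, substitution."""
    n = len(b)
    y = [0.0] * n
    for i in range(n):  # forward substitution: lower @ y = b
        y[i] = b[i] - sum(lower[i][k] * y[k] for k in range(i))
    x = [0.0] * n
    for i in reversed(range(n)):  # backward substitution: upper @ x = y
        tail = sum(upper[i][k] * x[k] for k in range(i + 1, n))
        x[i] = (y[i] - tail) / upper[i][i]
    return x


def matmul(x, y):
    """Plain nested-list matrix product, used only to check the factors."""
    return [
        [sum(x[i][k] * y[k][j] for k in range(len(y))) for j in range(len(y[0]))]
        for i in range(len(x))
    ]


A = [[4.0, 3.0], [6.0, 3.0]]
L, U = lu_decompose(A)
solution = lu_solve(L, U, [10.0, 12.0])
```

Once `L` and `U` are computed, they can be reused to solve for any number of right-hand sides, which is where the decomposition pays off. Production code would normally rely on a library routine with pivoting rather than this sketch.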

294

1.34K reads

CURATED FROM

IDEAS CURATED BY

The idea is part of this collection:

Learn more about problemsolving with this collection

Understanding the importance of decision-making

Identifying biases that affect decision-making

Analyzing the potential outcomes of a decision

Related collections

Similar ideas to Matrix Decomposition

"The world is most interesting when we can see the complex patterns that connect its different parts to one another. And we can’t truly do that unless we look beyond the boundaries and the compartments of singular disciplines and singular ways of thinking about reality."

ZAT RANA

"Prioritize exercise"

Physical activity is key to maneging stress and improving mental health. And the best news is, there are many different kinds of activities that can reduce your stress.

- Join a gym, take a class, or exercise outside. Keep in mind that there are many different ways to get more physical ...

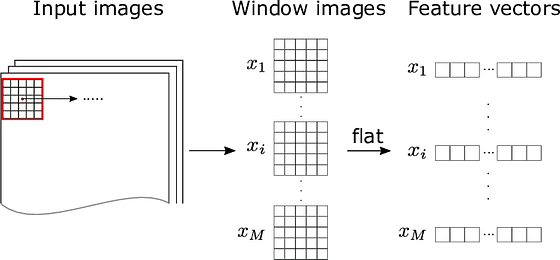

Feature extraction and suitable machine learning model

When dealing with large datasets that have many columns and variables, feature extraction is used to divide and reduce the existing data into manageable groups.

For image processing, however, machines cannot extract features such as edges, shapes, or even size in this way.
