A Machine That Sees

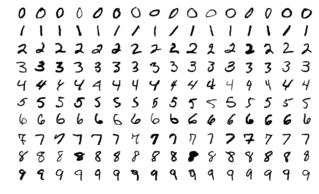

Let’s do a quick run-through of how a machine could see. First, let’s take the MNIST dataset, a collection of images of handwritten digits from 0 to 9.

Each digit is a 28 x 28 image, so there are 784 pixels in the whole image. We take our image and flatten it (instead of 28 x 28, we turn the shape into 784 x 1). We pop this vector into our neural network, and on the other side we end up with our prediction: the digit the network thinks it is looking at!
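To make the flatten-and-predict step concrete, here is a minimal sketch in Python with NumPy. It is only an illustration under loud assumptions: the image is random noise standing in for a real MNIST digit, the layer sizes (784 inputs, 32 hidden units, 10 outputs) are hypothetical, and the weights are random rather than trained, so the predicted digit carries no meaning. The point is just to show the shapes moving through the network.

```python
import numpy as np

rng = np.random.default_rng(0)

# Stand-in for one MNIST digit: a 28 x 28 grid of pixel intensities in [0, 1].
# (Assumption: random noise, not a real digit from the dataset.)
image = rng.random((28, 28))

# Flatten: instead of 28 x 28, the shape becomes 784 x 1.
x = image.reshape(784, 1)

# Hypothetical network: 784 inputs -> 32 hidden units -> 10 digit classes.
# The weights are random and untrained, purely for illustration.
W1 = rng.normal(0.0, 0.05, size=(32, 784))
b1 = np.zeros((32, 1))
W2 = rng.normal(0.0, 0.05, size=(10, 32))
b2 = np.zeros((10, 1))


def forward(x):
    """Run one flattened image through the network and return class probabilities."""
    hidden = np.maximum(0.0, W1 @ x + b1)   # ReLU activation
    logits = W2 @ hidden + b2               # one raw score per digit 0..9
    exp = np.exp(logits - logits.max())     # softmax, shifted for numerical stability
    return exp / exp.sum()


probs = forward(x)
prediction = int(np.argmax(probs))          # index of the most probable digit
print("input shape:", x.shape, "predicted digit:", prediction)
```

With a trained network, `prediction` would be the digit the model believes the image shows; here it only demonstrates that a 784 x 1 input comes out as one score per digit.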

