Quantum Eyes

Curated from: studentsxstudents.com


Sight. One of nature’s finest gifts.

The ability of an organ to take photons from the outside world, focus them, and then convert them into electrical signals is pure awesomeness! But what’s even more awesome is the organ behind your eyeballs: the brain!

The brain is able to take those electrical signals, convert them into images, and then figure out things like who that person across the street is or what those funny symbols mean. It’s a miracle that we’re able to see, but an even greater miracle is our ability to grant that gift to machines. One way to do it is through Machine Learning.


A Machine That Sees

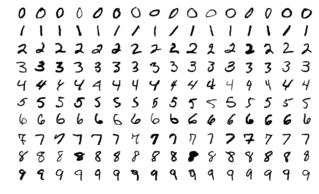

Let’s do a quick run-through of how a machine could see. First, let’s take the MNIST dataset, a dataset of handwritten digits from 0 to 9:

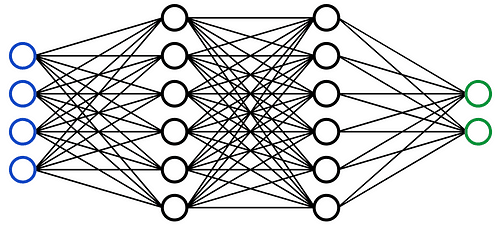

Each digit is a 28 x 28 image, meaning there’s a total of 784 pixels in the whole image. We take our image and flatten it (instead of 28 x 28, we turn the shape into 784 x 1). We pop this guy into our neural network and on the other side we end up with our prediction!


Turns out this simple neural network is able to achieve 88% accuracy! That’s pretty impressive, considering that it’s just a bunch of linear layers and activations. But let’s try something even better…
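The flatten-then-predict pipeline can be sketched in a few lines of NumPy. This is only an illustration: the random image stands in for a real MNIST digit, the layer sizes (784 → 128 → 10) are assumptions, and the weights are untrained, so the prediction itself is meaningless until the network is trained.

```python
import numpy as np

rng = np.random.default_rng(0)

# Stand-in for one MNIST digit: a 28 x 28 grid of pixel intensities in [0, 1].
image = rng.random((28, 28))

# Flatten 28 x 28 into a single vector of 784 values.
x = image.reshape(-1)

# Hypothetical layer sizes: 784 inputs -> 128 hidden units -> 10 digit classes.
W1 = rng.normal(0.0, 0.01, size=(128, 784))
b1 = np.zeros(128)
W2 = rng.normal(0.0, 0.01, size=(10, 128))
b2 = np.zeros(10)

def forward(x):
    """Two linear layers with a ReLU in between, followed by a softmax."""
    hidden = np.maximum(0.0, W1 @ x + b1)   # linear layer + ReLU activation
    logits = W2 @ hidden + b2               # linear layer producing 10 scores
    exp = np.exp(logits - logits.max())     # softmax, shifted for stability
    return exp / exp.sum()

probs = forward(x)                  # one probability per digit 0..9
prediction = int(np.argmax(probs))  # the digit with the highest probability
```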


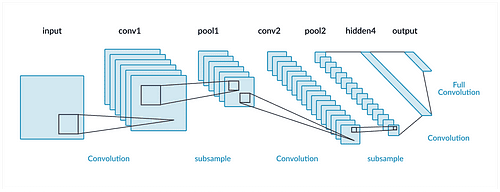

CNNs

CNNs are actually able to achieve pretty insane results: 99.75% accuracy! CNNs owe their incredible power to their ability to look at the surrounding pixels and, based on that, extract features and information from that patch of pixels. Normal NNs can’t do that, so they’re a bit less powerful.

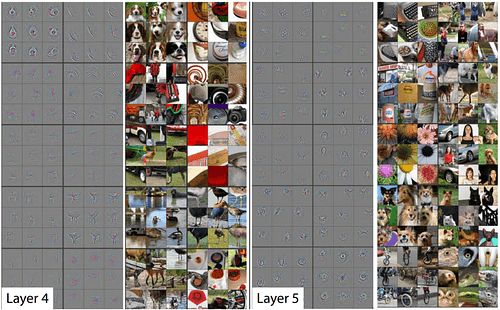

CNNs look like this:

The 3 main parts of a CNN are:

- Convolutional Layers: These are the layers that have kernels. They’re really good at extracting features from the image and passing them on

- Max Pooling Layers: These layers shrink the feature map (the size of the features), which reduces the computational resources needed and helps prevent overfitting

- Fully Connected Layers: They appear at the end of the CNN. They’re just linear layers that take the results of the CNN as inputs and output the label

That’s the architecture, but the really amazing part of a CNN is the kernels inside its convolutional layers! These kernels are only matrices (basically a bunch of numbers arranged in a box), but they’re able to extract tons of features from the image.
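To make the kernel idea concrete, here’s a minimal NumPy sketch of a convolutional layer followed by max pooling. The toy 6 x 6 image and the hand-picked vertical-edge kernel are assumptions chosen for illustration; in a real CNN the kernel values are learned during training.

```python
import numpy as np

def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1).

    Like most CNN libraries, this does not flip the kernel, so strictly
    speaking it is a cross-correlation.
    """
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            patch = image[i:i + kh, j:j + kw]
            out[i, j] = np.sum(patch * kernel)
    return out

def max_pool(feature_map, size=2):
    """Keep the largest value in each non-overlapping size x size block."""
    h = feature_map.shape[0] // size * size
    w = feature_map.shape[1] // size * size
    blocks = feature_map[:h, :w].reshape(h // size, size, w // size, size)
    return blocks.max(axis=(1, 3))

# Toy 6 x 6 image: dark left half, bright right half (a vertical edge).
image = np.zeros((6, 6))
image[:, 3:] = 1.0

# Hand-picked kernel that responds to dark-to-bright vertical edges.
vertical_edge = np.array([[-1.0, 0.0, 1.0],
                          [-1.0, 0.0, 1.0],
                          [-1.0, 0.0, 1.0]])

features = convolve2d(image, vertical_edge)  # 4 x 4 feature map
pooled = max_pool(features)                  # 2 x 2 after pooling
```

The feature map is large only in the columns where the kernel straddles the edge, and pooling keeps that strong response while shrinking the map.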


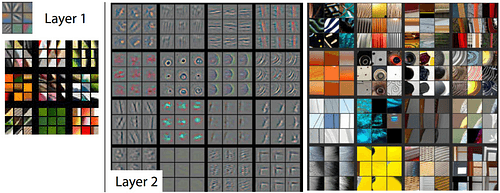

A Bit More Detail

By the first layer the kernels can start telling which images have vertical lines, horizontal lines and different colors. By layer 2 you can put those features together and form more complex shapes like corners or circles.

Layer 3 becomes even cooler! Now you get repeating patterns, car wheels and even humans.

And as you stack more and more convolutions you get more and more complex features. That’s insane because it means CNNs are able to extract the meta structures of the image.


Once you’ve extracted those features, you can put them in a neural network and classify your image! But as cool as that sounds, there are 2 Achilles’ heels:

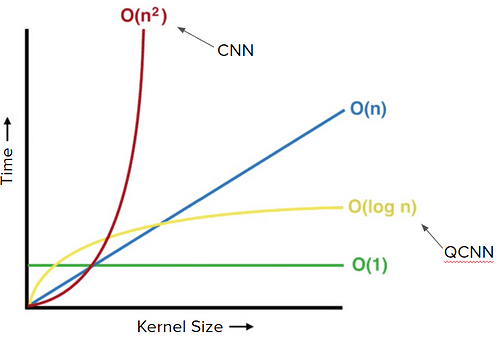

- When input dimensions increase exponentially, the time it takes to run the CNN also becomes exponential. An example of a problem like that is modeling quantum systems on classical computers, where the input space grows exponentially with the number of particles

- As you increase the kernel size, the time it takes to run the CNN also grows quickly, with the square of the kernel size for a 2D kernel
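To get a feel for the first problem, here’s a rough sketch of how much classical memory the full state vector of an n-qubit system needs. It assumes each amplitude is stored as a 16-byte complex number (NumPy’s complex128); the function names are made up for this example.

```python
def amplitudes_needed(num_qubits):
    """Number of complex amplitudes in the state vector of num_qubits qubits."""
    return 2 ** num_qubits

def memory_bytes(num_qubits, bytes_per_amplitude=16):
    """Classical memory for the full state vector (complex128 = 16 bytes)."""
    return amplitudes_needed(num_qubits) * bytes_per_amplitude

for n in (10, 20, 30, 40):
    gib = memory_bytes(n) / 2 ** 30
    print(f"{n} qubits: {amplitudes_needed(n)} amplitudes, {gib} GiB")
```

Every extra qubit doubles the memory, so 30 qubits already need 16 GiB and 40 qubits need 16,384 GiB.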

But Quantum’s here to the rescue!


Quantum Convolutional Neural Networks

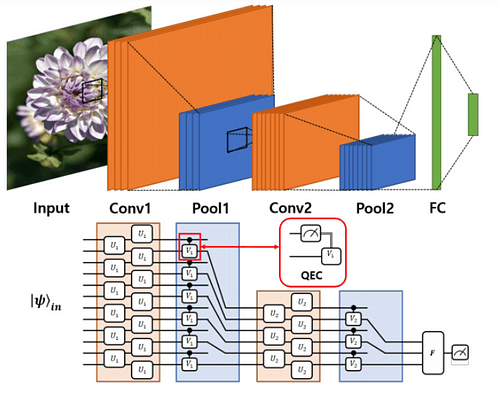

To tackle the first problem, we could just let qubits represent the quantum system! Introducing: Quantum Convolutional Neural Networks (QCNNs).

This is a neural network that literally replicates the whole CNN architecture. Convolutional layers and max pooling are all translated to the quantum realm!

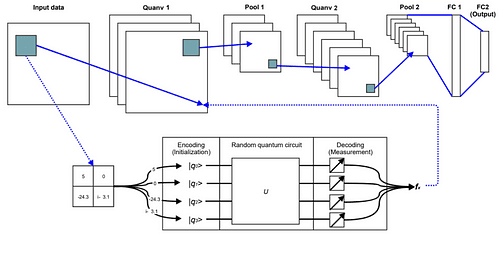

The architecture itself looks like this:


And it has the familiar parts of your classical CNN:

1. Convolutional Layers: Instead of kernels, you have gates that are applied to adjacent qubits

2. Pooling Layers: Where you measure half of the qubits, drop them from the circuit, and keep going with the other half

3. Fully Connected Layer: Just like the normal one

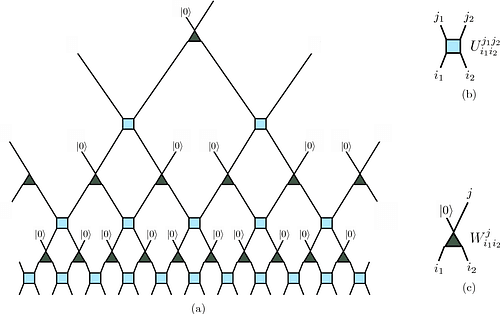

If you’re a super quantum nerd you might have noticed that this architecture bears some resemblance to a reverse MERA (Multi-scale Entanglement Renormalization Ansatz).

A normal MERA takes 1 qubit and then exponentially increases the number of qubits by introducing new qubits into the circuit.

Reverse MERA And QEC

But in the reverse MERA, we’re doing the opposite by exponentially decreasing the number of qubits.
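Here’s a tiny sketch of that shrinking, assuming every pooling layer keeps exactly half of the qubits (rounded down). The function name is made up for this example, and it only counts qubits; it doesn’t simulate any gates.

```python
def qubits_per_layer(num_qubits):
    """Qubit count after each pooling layer, assuming each layer keeps half."""
    counts = [num_qubits]
    while counts[-1] > 1:
        counts.append(counts[-1] // 2)
    return counts

layers = qubits_per_layer(8)   # [8, 4, 2, 1]
num_pooling_layers = len(layers) - 1
```

Because the count halves each time, 8 qubits need only 3 pooling layers, and in general the number of pooling layers grows with the logarithm of the input size.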

The bottleneck here is the range of possible qubits which the reversed MERA can reach. In other words, the QCNN might not be able to produce the labels. But we can overcome this by implementing Quantum Error Correction (QEC), the thing you see in the red box in the diagram.

This architecture works super well with problems that require a small input size, since our current quantum computers can’t hold that many qubits. But what if we wanted to use QCNNs for image classification or other problems that need more input space?


Quanvolutional Neural Networks

A Quanvolutional Neural Network (QNN) is basically a CNN but with quanvolutional layers (much like how CNNs have convolutional layers). A quanvolutional layer acts and behaves just like a convolutional layer!

Much like a normal convolutional layer, we take a subset of the image, pass it through our kernel, and then it outputs a part of the new image. We do this over and over again until we reach the linear layer, which classifies the image.

Since it acts so much like a normal convolutional layer, you could literally use both a QNN and a CNN together in the same model!
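A quanvolutional layer can be sketched with a small state-vector simulation in plain NumPy. Everything specific here is an assumption made for illustration: each pixel of a 2 x 2 patch is angle-encoded on its own qubit, a fixed random unitary stands in for the quantum kernel circuit, and the output is the exact expectation value of Pauli-Z on the first qubit. A real device would estimate that value from many repeated measurements.

```python
import numpy as np

rng = np.random.default_rng(0)
PATCH_SIZE = 2                  # the filter looks at 2 x 2 patches
NUM_QUBITS = PATCH_SIZE ** 2    # one qubit per pixel in the patch
DIM = 2 ** NUM_QUBITS

# Fixed random unitary standing in for the quantum "kernel" circuit.
# The Q factor of a QR decomposition of a complex matrix is unitary.
random_matrix = rng.normal(size=(DIM, DIM)) + 1j * rng.normal(size=(DIM, DIM))
KERNEL_UNITARY, _ = np.linalg.qr(random_matrix)

# Eigenvalue of Pauli-Z on qubit 0 for each basis state (qubit 0 = leading bit).
Z0_SIGNS = np.where(np.arange(DIM) < DIM // 2, 1.0, -1.0)

def encode_patch(pixels):
    """Angle-encode pixels in [0, 1]: each qubit becomes RY(pi * pixel)|0>."""
    state = np.array([1.0 + 0j])
    for p in pixels:
        theta = np.pi * p
        qubit = np.array([np.cos(theta / 2), np.sin(theta / 2)])
        state = np.kron(state, qubit)
    return state

def quanv_filter(pixels):
    """Apply the kernel unitary to the encoded patch and return <Z> on qubit 0."""
    state = KERNEL_UNITARY @ encode_patch(pixels)
    probabilities = np.abs(state) ** 2
    return float(probabilities @ Z0_SIGNS)

def quanvolve(image):
    """Slide the quantum filter over non-overlapping 2 x 2 patches."""
    out_h = image.shape[0] // PATCH_SIZE
    out_w = image.shape[1] // PATCH_SIZE
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            rows = slice(i * PATCH_SIZE, (i + 1) * PATCH_SIZE)
            cols = slice(j * PATCH_SIZE, (j + 1) * PATCH_SIZE)
            out[i, j] = quanv_filter(image[rows, cols].reshape(-1))
    return out

image = rng.random((4, 4))      # stand-in for a small grayscale image
feature_map = quanvolve(image)  # 2 x 2 map with values in [-1, 1]
```

The resulting feature map is just an array of numbers, which is why it can be handed straight to ordinary classical layers.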


Advantages

- Noise Resistant: With Quantum Error Correction, along with their quantum nature, QNNs are resistant to constant noise. In current quantum computers, there’s always a constant noise / error that occurs in quantum gates, but QNNs overcome it

- Better Results: In this paper, researchers use QNNs to diagnose Covid just from chest X-rays! They obtained 98% accuracy, 2% better than standard CNNs!

- Faster Training Time: Classical CNNs have O(n²) time, where n is the kernel size. QNNs have O(log(n)) time! That’s an insane speedup!
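To see how far apart those two growth rates drift, here’s a quick sketch that simply evaluates n² and log(n) for a few kernel sizes. It takes the quoted rates at face value and assumes a base-2 logarithm; it doesn’t measure real training time, and big-O notation hides constant factors.

```python
import math

def classical_cost(n):
    """Growth rate quoted above for a classical kernel of size n: n squared."""
    return n ** 2

def quantum_cost(n):
    """Growth rate quoted above for the quantum version: log2(n) (base assumed)."""
    return math.log2(n)

for n in (2, 4, 8, 16, 32):
    print(f"n={n}: classical ~ {classical_cost(n)}, quantum ~ {quantum_cost(n)}")
```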


Disadvantages

- Many Executions Needed: Since we have to stamp the kernel all over the image, and potentially do this for thousands of images, you’re going to have to run the quantum circuit a lot. Current quantum computers aren’t able to handle that many executions

- Difficult to Optimize: Inside QNNs there are things called encoding protocols. These protocols are very difficult to optimize. Researchers don’t know which protocols are good, useless or even counterproductive!
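A back-of-the-envelope count shows why the number of executions adds up so fast. The sketch below assumes 28 x 28 images, a 2 x 2 kernel moved with stride 2, and one circuit run per patch; these settings and the function name are illustrative choices, and real hardware would also need many repeated shots per patch.

```python
def circuit_executions(num_images, image_size=28, kernel_size=2, stride=2, shots=1):
    """Estimate how many times the quantum circuit has to run."""
    patches_per_side = (image_size - kernel_size) // stride + 1
    patches_per_image = patches_per_side ** 2
    return num_images * patches_per_image * shots

one_image = circuit_executions(1)              # 196 patches for one image
mnist_train = circuit_executions(60_000)       # the 60,000 MNIST training images
with_shots = circuit_executions(60_000, shots=1000)
```

Even with a single shot per patch, the MNIST training set alone needs 11,760,000 circuit runs, and 1,000 shots per patch pushes that to 11.76 billion.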


The World Is Changing

These new Quantum Machine Learning (QML) algorithms are but a testament to what is to come. Even though quantum computers are in their infancy, we have already seen new QML algorithms that outperform our old ones!

Many of our existing ML algorithms could be translated into QML algorithms, allowing them to take advantage of new properties like entanglement, superposition and insane parallel computing! It’s only a matter of time before these algorithms change the world!


IDEAS CURATED BY

卐 || एकं सत विप्रा बहुधा वदन्ति || Enthusiast || Collection Of Some Best Reads || Decentralizing...

अर्हम् Arham's ideas are part of this journey:
